Materials Graph Library (MatGL), an open-source graph deep learning library for materials science and chemistry

Chen, C., Zuo, Y., Ye, W., Li, X., Deng, Z. & Ong, S. P. A critical review of machine learning of energy materials. Adv. Energy Mater. 10, 1903242 (2020).

Schmidt, J., Marques, M. R. G., Botti, S. & Marques, M. A. L. Recent advances and applications of machine learning in solid-state materials science. npj Comput. Mater. 5, 83 (2019).

Westermayr, J., Gastegger, M., Schütt, K. T. & Maurer, R. J. Perspective on integrating machine learning into computational chemistry and materials science. J. Chem. Phys. 154, 230903 (2021).

Oviedo, F., Ferres, J. L., Buonassisi, T. & Butler, K. T. Interpretable and explainable machine learning for materials science and chemistry. Acc. Mater. Res. 3, 597–607 (2022).

Chen, C., Ye, W., Zuo, Y., Zheng, C. & Ong, S. P. Graph networks as a universal machine learning framework for molecules and crystals. Chem. Mater. 31, 3564–3572 (2019).

Schmidt, J., Pettersson, L., Verdozzi, C., Botti, S. & Marques, M. A. L. Crystal graph attention networks for the prediction of stable materials. Sci. Adv. 7, eabi7948 (2021).

Gasteiger, J., Groß, J. & Günnemann, S. Directional message passing for molecular graphs. In International Conference on Learning Representations (ICLR, 2020).

Gasteiger, J., Giri, S., Margraf, J. T. & Günnemann, S. Fast and uncertainty-aware directional message passing for non-equilibrium molecules. In 35th Conference on Neural Information Processing Systems. vol. 9 6790–6802 (NeurIPS, 2021).

Satorras, V. G., Hoogeboom, E. & Welling, M. E(n) equivariant graph neural networks. In Proceedings of the 38th International Conference on Machine Learning. 9323–9332 (ACM, 2021).

Liu, Y. et al. Spherical message passing for 3D molecular graphs. In International Conference on Learning Representations. (ICLR, 2022).

Brandstetter, J., Hesselink, R., van der Pol, E., Bekkers, E. J. & Welling, M. Geometric and physical quantities improve E(3) equivariant message passing. In International Conference on Learning Representations (ICLR, 2022).

Kaba, S.-O. & Ravanbakhsh, S. Equivariant networks for crystal structures. In Advances in Neural Information Processing Systems (NeurIPS, 2022).

Yan, K., Liu, Y., Lin, Y. & Ji, S. Periodic graph transformers for crystal material property prediction. In Advances in Neural Information Processing Systems (NeurIPS, 2022).

Zhang, Y.-W. et al. Roadmap for the development of machine learning-based interatomic potentials. Modell. Simul. Mater. Sci. Eng. 33, 023301 (2025).

Ko, T. W. & Ong, S. P. Recent advances and outstanding challenges for machine learning interatomic potentials. Nat. Comput. Sci. 3, 998–1000 (2023).

Unke, O. T. et al. Machine learning force fields. Chem. Rev. 121, 10142–10186 (2021).

Schütt, K. T., Sauceda, H. E., Kindermans, P.-J., Tkatchenko, A. & Müller, K.-R. SchNet – a deep learning architecture for molecules and materials. J. Chem. Phys. 148, 241722 (2018).

Schütt, K., Unke, O. & Gastegger, M. Equivariant message passing for the prediction of tensorial properties and molecular spectra. In Proceedings of the 38th International Conference on Machine Learning. 9377–9388 (2021).

Bartók, A. P., Payne, M. C., Kondor, R. & Csányi, G. Gaussian approximation potentials: the accuracy of quantum mechanics, without the electrons. Phys. Rev. Lett. 104, 136403 (2010).

Behler, J. & Parrinello, M. Generalized neural-network representation of high-dimensional potential-energy surfaces. Phys. Rev. Lett. 98, 146401 (2007).

Thompson, A. P., Swiler, L. P., Trott, C. R., Foiles, S. M. & Tucker, G. J. Spectral neighbor analysis method for automated generation of quantum-accurate interatomic potentials. J. Comput. Phys. 285, 316–330 (2015).

Drautz, R. Atomic cluster expansion for accurate and transferable interatomic potentials. Phys. Rev. B 99, 014104 (2019).

Batzner, S. et al. E(3)-equivariant graph neural networks for data-efficient and accurate interatomic potentials. Nat. Commun. 13, 2453 (2022).

Ko, T. W., Finkler, J. A., Goedecker, S. & Behler, J. Accurate fourth-generation machine learning potentials by electrostatic embedding. J. Chem. Theory Comput. 19, 3567–3579 (2023).

Kocer, E., Ko, T. W. & Behler, J. Neural network potentials: a concise overview of methods. Annu. Rev. Phys. Chem. 73, 163–186 (2022).

Ko, T. W., Finkler, J. A., Goedecker, S. & Behler, J. A fourth-generation high-dimensional neural network potential with accurate electrostatics including non-local charge transfer. Nat. Commun. 12, 398 (2021).

Liao, Y.-L. & Smidt, T. Equiformer: equivariant graph attention transformer for 3D atomistic graphs. In International Conference on Learning Representations (ICLR, 2023).

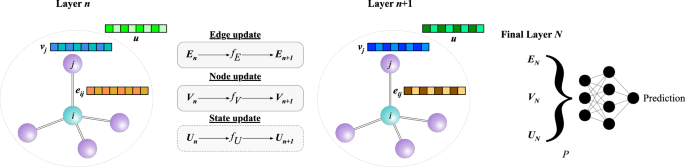

Battaglia, P. W. et al. Relational inductive biases, deep learning, and graph networks. Preprint at (2018).

Chen, C. & Ong, S. P. A universal graph deep learning interatomic potential for the periodic table. Nat. Comput. Sci. 2, 718–728 (2022).

Chen, C., Zuo, Y., Ye, W., Li, X. & Ong, S. P. Learning properties of ordered and disordered materials from multi-fidelity data. Nat. Comput. Sci. 1, 46–53 (2021).

Ko, T. W. & Ong, S. P. Data-efficient construction of high-fidelity graph deep learning interatomic potentials. npj Comput. Mater. 11, 65 (2025).

Han, J. et al. A survey of geometric graph neural networks: Data structures, models and applications. Front. Comput. Sci. 19, 1911375 (2025).

Duval, A. et al. A hitchhiker’s guide to geometric GNNs for 3D atomic systems. Preprint at (2023).

Batzner, S. et al. E(3)-equivariant graph neural networks for data-efficient and accurate interatomic potentials. Nat. Commun. 13, 2453 (2022).

Batatia, I., Kovacs, D. P., Simm, G., Ortner, C. & Csányi, G. MACE: Higher order equivariant message passing neural networks for fast and accurate force fields. Adv. Neural Inf. Process. Syst. 35, 11423–11436 (2022).

Liao, Y.-L. & Smidt, T. Equiformer: equivariant graph attention transformer for 3D atomistic graphs. In International Conference on Learning Representations (ICLR, 2023).

Wang, Y. et al. Enhancing geometric representations for molecules with equivariant vector-scalar interactive message passing. Nat. Commun. 15, 313 (2024).

Frank, J. T., Unke, O. T., Müller, K.-R. & Chmiela, S. A Euclidean transformer for fast and stable machine learned force fields. Nat. Commun. 15, 6539 (2024).

Gasteiger, J., Becker, F. & Günnemann, S. GemNet: universal directional graph neural networks for molecules. Adv. Neural Inf. Process. Syst. 34, 6790–6802 (2021).

Fung, V., Zhang, J., Juarez, E. & Sumpter, B. G. Benchmarking graph neural networks for materials chemistry. npj Comput. Mater. 7, 1–8 (2021).

Bandi, S., Jiang, C. & Marianetti, C. A. Benchmarking machine learning interatomic potentials via phonon anharmonicity. Mach. Learn. Sci. Technol. 5, 030502 (2024).

Fu, X. et al. Forces are not enough: Benchmark and critical evaluation for machine learning force fields with molecular simulations. Trans. Mach. Learn. Res. (2023).

Deng, B. et al. CHGNet as a pretrained universal neural network potential for charge-informed atomistic modelling. Nat. Mach. Intell. 5, 1031–1041 (2023).

Park, Y., Kim, J., Hwang, S. & Han, S. Scalable parallel algorithm for graph neural network interatomic potentials in molecular dynamics simulations. J. Chem. Theory Comput. 20, 4857–4868 (2024).

Batatia, I. et al. A foundation model for atomistic materials chemistry. Preprint at (2024).

Barroso-Luque, L. et al. Open Materials 2024 (OMat24) inorganic materials dataset and models. Preprint at (2024).

Neumann, M. et al. Orb: a fast, scalable neural network potential. Preprint at (2024).

Pelaez, R. P. et al. TorchMD-Net 2.0: fast neural network potentials for molecular simulations. J. Chem. Theory Comput. 20, 4076–4087 (2024).

Schütt, K. T., Hessmann, S. S. P., Gebauer, N. W. A., Lederer, J. & Gastegger, M. SchNetPack 2.0: a neural network toolbox for atomistic machine learning. J. Chem. Phys. 158, 144801 (2023).

Axelrod, S., Shakhnovich, E. & Gómez-Bombarelli, R. Excited state non-adiabatic dynamics of large photoswitchable molecules using a chemically transferable machine learning potential. Nat. Commun. 13, 3440 (2022).

Fey, M. & Lenssen, J. E. Fast graph representation learning with PyTorch Geometric. In Proc. ICLR 2019 Workshop on Representation Learning on Graphs and Manifolds (ICLR, 2019).

Abadi, M. et al. TensorFlow: a system for large-scale machine learning. In Proceedings of the 12th USENIX Conference on Operating Systems Design and Implementation. USA, p 265–283 (ACM, 2016).

Bradbury, J. et al. JAX: composable transformations of Python+NumPy programs. (2018).

Wang, M. Y. Deep graph library: Towards efficient and scalable deep learning on graphs. In ICLR workshop on representation learning on graphs and manifolds (2019).

Huang, X., Kim, J., Rees, B. & Lee, C.-H. Characterizing the efficiency of graph neural network frameworks with a magnifying glass. In 2022 IEEE International Symposium on Workload Characterization (IISWC). pp 160–170 (IEEE, 2022).

Ong, S. P. et al. Python Materials Genomics (pymatgen): a robust, open-source python library for materials analysis. Comput. Mater. Sci. 68, 314–319 (2013).

Larsen, A. H. et al. The atomic simulation environment-a Python library for working with atoms. J. Phys. Condens. Matter 29, 273002 (2017).

Simeon, G. & De Fabritiis, G. TensorNet: Cartesian tensor representations for efficient learning of molecular potentials. Adv. Neural Inf. Process. Syst. 36 (2024).

Vinyals, O., Bengio, S. & Kudlur, M. Order matters: Sequence to sequence for sets. In: Bengio, Y. & LeCun, Y. (eds.) Proc. 4th International Conference on Learning Representations, ICLR 2016, San Juan, Puerto Rico, May 2–4, 2016, Conference Track Proceedings (2016).

Glorot, X. & Bengio, Y. Understanding the difficulty of training deep feedforward neural networks. In Proceedings of the Thirteenth International Conference on Artificial Intelligence and Statistics. pp 249–256 (PMLR, 2010).

He, K., Zhang, X., Ren, S. & Sun, J. Delving deep into rectifiers: surpassing human-level performance on ImageNet classification. In 2015 IEEE International Conference on Computer Vision (ICCV). pp 1026–1034 (IEEE, 2015).

Bitzek, E., Koskinen, P., Gähler, F., Moseler, M. & Gumbsch, P. Structural relaxation made simple. Phys. Rev. Lett. 97, 170201 (2006).

Broyden, C. G., Dennis Jr, J. E. & Moré, J. J. On the local and superlinear convergence of quasi-Newton methods. IMA J. Appl. Math. 12, 223–245 (1973).

Liu, D. C. & Nocedal, J. On the limited memory BFGS method for large scale optimization. Math. Program. 45, 503–528 (1989).

Garijo del Río, E., Mortensen, J. J. & Jacobsen, K. W. Local Bayesian optimizer for atomic structures. Phys. Rev. B 100, 104103 (2019).

Berendsen, H. J. C., Postma, J. P. M., Van Gunsteren, W. F., DiNola, A. & Haak, J. R. Molecular dynamics with coupling to an external bath. J. Chem. Phys. 81, 3684–3690 (1984).

Andersen, H. C. Molecular dynamics simulations at constant pressure and/or temperature. J. Chem. Phys. 72, 2384–2393 (1980).

Schneider, T. & Stoll, E. Molecular-dynamics study of a three-dimensional one-component model for distortive phase transitions. Phys. Rev. B 17, 1302–1322 (1978).

Nosé, S. A molecular dynamics method for simulations in the canonical ensemble. Mol. Phys. 52, 255–268 (1984).

Hoover, W. G. Canonical dynamics: equilibrium phase-space distributions. Phys. Rev. A 31, 1695–1697 (1985).

Liu, R. et al. MatCalc. (2024).

Sugita, Y. & Okamoto, Y. Replica-exchange molecular dynamics method for protein folding. Chem. Phys. Lett. 314, 141–151 (1999).

Adams, D. Grand canonical ensemble Monte Carlo for a Lennard-Jones fluid. Mol. Phys. 29, 307–311 (1975).

Dunn, A., Wang, Q., Ganose, A., Dopp, D. & Jain, A. Benchmarking materials property prediction methods: the Matbench test set and automatminer reference algorithm. npj Comput. Mater. 6, 1–10 (2020).

Ramakrishnan, R., Dral, P. O., Rupp, M. & Von Lilienfeld, O. A. Quantum chemistry structures and properties of 134 kilo molecules. Sci. Data 1, 140022 (2014).

Wang, A. Y.-T., Kauwe, S. K., Murdock, R. J. & Sparks, T. D. Compositionally restricted attention-based network for materials property predictions. npj Comput. Mater. 7, 1–10 (2021).

Pozdnyakov, S. N. & Ceriotti, M. Incompleteness of graph neural networks for points clouds in three dimensions. Mach. Learn.: Sci. Technol. 3, 045020 (2022).

Smith, J. S., Nebgen, B., Lubbers, N., Isayev, O. & Roitberg, A. E. Less is more: sampling chemical space with active learning. J. Chem. Phys. 148, 241733 (2018).

Kaplan, A. D. et al. A foundational potential energy surface dataset for materials. Preprint at (2025).

Zhang, S. Exploring the frontiers of condensed-phase chemistry with a general reactive machine learning potential. Nat. Chem. 16, 727–734 (2024).

Kovács, D. P. et al. MACE-OFF: short-range transferable machine learning force fields for organic molecules. J. Am. Chem. Soc. 147, 17598–17611 (2025).

Qi, J., Ko, T. W., Wood, B. C., Pham, T. A. & Ong, S. P. Robust training of machine learning interatomic potentials with dimensionality reduction and stratified sampling. npj Comput. Mater. 10, 43 (2024).

Gonzales, C., Fuemmeler, E., Tadmor, E. B., Martiniani, S. & Miret, S. Benchmarking of universal machine learning interatomic potentials for structural relaxation. In AI for Accelerated Materials Design (NeurIPS, 2024).

Yu, H., Giantomassi, M., Materzanini, G., Wang, J. & Rignanese, G.-M. Systematic assessment of various universal machine-learning interatomic potentials. Mater. Genome Eng. Adv. 2, e58 (2024).

Pan, H. Benchmarking coordination number prediction algorithms on inorganic crystal structures. Inorg. Chem. 60, 1590–1603 (2021).

Loew, A., Sun, D., Wang, H.-C., Botti, S. & Marques, M. A. Universal machine learning interatomic potentials are ready for phonons. npj Comput. Mater. 11, 178 (2025).

Batatia, I. et al. A foundation model for atomistic materials chemistry. Preprint at (2023).

Zuo, Y. Performance and cost assessment of machine learning interatomic potentials. J. Phys. Chem. A 124, 731–745 (2020).

Zhao, J. et al. Complex Ga2O3 polymorphs explored by accurate and general-purpose machine-learning interatomic potentials. npj Comput. Mater. 9, 159 (2023).

Chen, Z., Du, T., Krishnan, N. A., Yue, Y. & Smedskjaer, M. M. Disorder-induced enhancement of lithium-ion transport in solid-state electrolytes. Nat. Commun. 16, 1057 (2025).

Grimme, S., Antony, J., Ehrlich, S. & Krieg, H. A consistent and accurate ab initio parametrization of density functional dispersion correction (DFT-D) for the 94 elements H-Pu. J. Chem. Phys. 132, 154104 (2010).

Poltavsky, I. et al. Crash testing machine learning force fields for molecules, materials, and interfaces: model analysis in the TEA Challenge 2023. Chem. Sci. 16, 3720–3737 (2025).

Poltavsky, I. et al. Crash testing machine learning force fields for molecules, materials, and interfaces: molecular dynamics in the TEA challenge 2023. Chem. Sci. 16, 3738–3754 (2025).

Bihani, V. et al. EGraFFBench: evaluation of equivariant graph neural network force fields for atomistic simulations. Digit. Discov. 3, 759–768 (2024).

Chen, C. et al. Accelerating computational materials discovery with machine learning and cloud high-performance computing: from large-scale screening to experimental validation. J. Am. Chem. Soc. 146, 20009–20018 (2024).

Ojih, J., Al-Fahdi, M., Yao, Y., Hu, J. & Hu, M. Graph theory and graph neural network assisted high-throughput crystal structure prediction and screening for energy conversion and storage. J. Mater. Chem. A 12, 8502–8515 (2024).

Sivak, J. T. et al. Discovering high-entropy oxides with a machine-learning interatomic potential. Phys. Rev. Lett. 134, 216101 (2025).

Taniguchi, T. Exploration of elastic moduli of molecular crystals via database screening by pretrained neural network potential. CrystEngComm 26, 631–638 (2024).

Mathew, K. et al. Atomate: a high-level interface to generate, execute, and analyze computational materials science workflows. Comput. Mater. Sci. 139, 140–152 (2017).

Miret, S., Lee, K. L. K., Gonzales, C., Nassar, M. & Spellings, M. The open MatSci ML toolkit: a flexible framework for machine learning in materials science. Trans. Mach. Learn. Res. (2023).

te Velde, G. et al. Chemistry with ADF. J. Comput. Chem. 22, 931–967 (2001).

Schwalbe-Koda, D., Hamel, S., Sadigh, B., Zhou, F. & Lordi, V. Model-free estimation of completeness, uncertainties, and outliers in atomistic machine learning using information theory. Nat. Commun. 16, 4014 (2025).

Musielewicz, J., Lan, J., Uyttendaele, M. & Kitchin, J. R. Improved uncertainty estimation of graph neural network potentials using engineered latent space distances. J. Phys. Chem. C 128, 20799–20810 (2024).

Podryabinkin, E. V. & Shapeev, A. V. Active learning of linearly parametrized interatomic potentials. Comput. Mater. Sci. 140, 171–180 (2017).

Kulichenko, M. et al. Uncertainty-driven dynamics for active learning of interatomic potentials. Nat. Comput. Sci. 3, 230–239 (2023).

Park, H., Onwuli, A., Butler, K. T. & Walsh, A. Mapping inorganic crystal chemical space. Faraday Discuss. 256, 601–613 (2025).

Onwuli, A., Hegde, A. V., Nguyen, K. V., Butler, K. T. & Walsh, A. Element similarity in high-dimensional materials representations. Digit. Discov. 2, 1558–1564 (2023).

Landrum, G. et al. RDKit: a software suite for cheminformatics, computational chemistry, and predictive modeling. Greg. Landrum 8, 5281 (2013).

Ruddigkeit, L., Van Deursen, R., Blum, L. C. & Reymond, J.-L. Enumeration of 166 billion organic small molecules in the chemical universe database GDB-17. J. Chem. Inf. Model. 52, 2864–2875 (2012).

Tran, R. Surface energies of elemental crystals. Sci. Data 3, 1–13 (2016).
