Probing the limitations of multimodal language models for chemistry and materials research

The MaCBench framework

Our benchmark design is guided by the observation that scientific work requires not only access to multiple modalities of information but also the ability to flexibly integrate them. To probe these capabilities of VLLMs meaningfully, rather than creating artificial question–answer-based challenges, we focus on tasks that mirror real scientific workflows, from interpreting scientific literature to evaluating laboratory conditions and analyzing experimental data (see Fig. 1). This approach allows us to evaluate not only the models’ ability to process different types of information but also their capacity to use this information to support scientific discovery. To assess performance in a broad range of settings, we use both images mined from patents and images we generated from scratch.

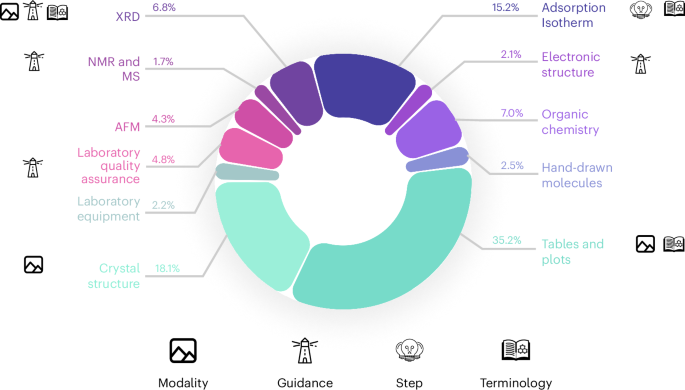

The framework evaluates VLLM performance across three key focus areas: data extraction (teal), in silico and laboratory experiments (purple) and data interpretation (blue). The benchmark includes diverse tasks spanning tables, plots, organic chemistry diagrams, crystal structures, atomic force microscopy (AFM) imaging, spectroscopy and materials characterization. Each task requires domain-specific visual understanding and scientific reasoning, from extracting numerical values to analyzing complex experimental set-ups and interpreting spectroscopic data. Credit: icons, Rainy Ting, svgrepo.com.

The benchmark is structured around three pillars that form the basis of many scientific workflows: information extraction, in silico or laboratory experiments, and data interpretation. Within each pillar, we include tasks spanning various scientific activities (see Fig. 2). The information extraction pillar assesses performance in parsing scientific literature, including extracting data from tables and plots and interpreting chemical structures. The experiment execution pillar evaluates the models’ ability to identify laboratory equipment, assess laboratory safety conditions and understand crystal structures (as potential simulation artifacts). The data interpretation pillar tests the models’ capability to analyze various types of scientific data, from spectral analysis to electronic structure interpretation.

MaCBench comprises eleven distinct topics with their respective proportions, ranging from tables and plots (35.2%) to mass spectrometry (MS) and nuclear magnetic resonance (NMR) analysis (1.7%). Each segment is annotated with relevant icons indicating the ablations we conducted on those tasks: modality understanding (image icon), guidance requirements (lighthouse icon), reasoning steps (lightbulb icon) and terminology complexity (book icon). The chart illustrates the benchmark’s comprehensive coverage of chemistry and materials tasks.

Here, a task refers to a single prompt template containing multiple questions. Each task is either a multiple-choice question (MCQ) task or a numeric-answer task; the current corpus has 779 MCQs and 374 numeric-answer questions. A topic is a collection of tasks on the same subject, and one topic can contain different types of task. For example, the X-ray diffraction (XRD) topic includes tasks on identifying peak positions and another set of tasks on ordering peak positions in ascending or descending order. The three overarching focus areas are data extraction, data interpretation and experiments, each encompassing multiple topics.
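The hierarchy of focus areas, topics, tasks and questions can be pictured as nested records. The sketch below is a hypothetical illustration, not the benchmark's actual schema: the class names, field names and toy task names are our own, and we assume that all questions in a task share the task's answer type.

```python
from dataclasses import dataclass, field
from enum import Enum


class AnswerType(Enum):
    MCQ = "multiple_choice"
    NUMERIC = "numeric"


@dataclass
class Task:
    """One prompt template; we assume all its questions share one answer type."""
    name: str
    answer_type: AnswerType
    questions: list[str] = field(default_factory=list)


@dataclass
class Topic:
    """Tasks on the same subject (e.g. XRD); task types may differ."""
    name: str
    tasks: list[Task] = field(default_factory=list)


@dataclass
class FocusArea:
    """One of the three focus areas, e.g. data interpretation."""
    name: str
    topics: list[Topic] = field(default_factory=list)


def count_questions(areas: list[FocusArea]) -> dict[AnswerType, int]:
    """Count questions of each answer type across the whole hierarchy."""
    counts = {answer_type: 0 for answer_type in AnswerType}
    for area in areas:
        for topic in area.topics:
            for task in topic.tasks:
                counts[task.answer_type] += len(task.questions)
    return counts


# Toy example (hypothetical): an XRD topic with a numeric and an ordering task.
xrd = Topic("XRD", [
    Task("peak_position", AnswerType.NUMERIC, ["q1", "q2"]),
    Task("peak_ordering", AnswerType.MCQ, ["q3"]),
])
areas = [FocusArea("data interpretation", [xrd])]
counts = count_questions(areas)
```

Counting at the question level, rather than the task level, matches how the corpus size (779 MCQs and 374 numeric-answer questions) is reported.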

Performance landscape

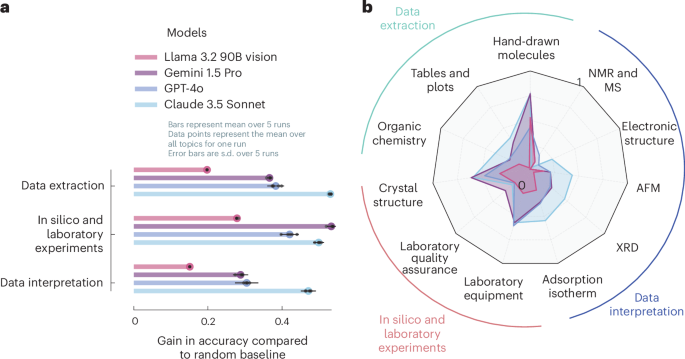

There is considerable variation in model performance across different task types and modalities (Fig. 3; see Supplementary Table 1 for detailed descriptions of all tasks); however, when averaged over different tasks, Claude 3.5 Sonnet is the leading model on all three task families. Notably, the models do not fail at one specific part of the scientific process but struggle in all of them, suggesting that broader automation is not hindered by one bottleneck but requires advances on multiple fronts. Interestingly, even for a foundational pillar of the scientific process—that is, data extraction—some models do not perform much better than random guessing (for instance, Llama 3.2 90B Vision in Fig. 3). Current systems tend to perform best on MCQ-based perception tasks (for example, laboratory equipment and hand-drawn molecules in Fig. 3).

a, Accuracy gains over random baselines for Claude 3.5 Sonnet, GPT-4o, Gemini 1.5 Pro and Llama 3.2 90B Vision, averaged across all tasks in each of the three MaCBench focus areas: data extraction, experimental understanding and interpretation. We show performance as the fraction of correctly answered questions relative to a random baseline; a performance of 0 means that the model is indistinguishable from random guessing. The error bars indicate the s.d. of the fraction of correctly answered questions over five different runs. b, Radar plot of relative model performance across topics, again shown as the fraction of correctly answered questions relative to a random baseline (the plots without normalization are shown in Supplementary Fig. 2).
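The normalization against a random baseline can be sketched as follows. We assume the gain is the difference between accuracy and baseline (consistent with 0 meaning random guessing), that the baseline for a single-answer MCQ with n options is 1/n, and that the s.d. is the sample s.d. over runs; the function names and accuracy values are hypothetical.

```python
import statistics


def mcq_random_baseline(n_options: int) -> float:
    """Expected accuracy of uniform guessing on a single-answer MCQ (assumption)."""
    return 1.0 / n_options


def gain_over_baseline(run_accuracies: list[float], baseline: float) -> tuple[float, float]:
    """Mean and sample s.d. of (accuracy - baseline) over repeated runs.

    0 means indistinguishable from random guessing; subtracting a constant
    baseline shifts the mean but leaves the s.d. unchanged.
    """
    gains = [accuracy - baseline for accuracy in run_accuracies]
    return statistics.mean(gains), statistics.stdev(gains)


# Hypothetical accuracies of one model on one four-option MCQ task over five runs.
runs = [0.60, 0.62, 0.58, 0.61, 0.59]
mean_gain, sd_gain = gain_over_baseline(runs, mcq_random_baseline(4))
```

With these toy numbers the mean gain is 0.35 above a 0.25 baseline. A ratio (accuracy divided by baseline) is an alternative normalization, but it would place random guessing at 1 rather than 0.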

Data extraction

Our analysis shows that the first step of the scientific workflow, data extraction, already poses considerable challenges for the models we tested. This is particularly the case when extracting science-specific data, for instance, on organic reactions and molecules. Although the best models perform well at extracting information from reaction diagrams, they fail to correctly describe the relationship between isomers (see Supplementary Fig. 4). As discussed below, this is probably because models struggle with spatial reasoning. Furthermore, even the extraction of compositions from tables leaves room for improvement for the VLLMs we tested (average accuracy of 0.53), with Llama 3.2 90B Vision performing indistinguishably from random guessing.

In silico and laboratory experiments

A similar variance in performance is observed for tasks related to the execution of laboratory or in silico experiments. Although models show good performance at recognizing laboratory equipment (average accuracy of 0.77), reasoning about laboratory scenarios, for example, comparing the safety hazards of two similar laboratory set-ups, exhibits low performance (average accuracy of 0.46).

The disparity between equipment identification and safety assessment performance suggests that although models can learn to recognize standard laboratory equipment, they still struggle with the more complex reasoning required for safe laboratory operations, which calls into question their ability to assist in real-world experiment planning and execution. This finding also implies that current models cannot bridge the gaps in tacit knowledge frequently discussed in biosafety scenarios44,45.

Furthermore, the interpretation of crystal structure renderings—a crucial step for in silico experiments—demonstrates performance that is indistinguishable from random guessing in some cases, for example, in the assignment of space groups (see Supplementary Fig. 3).

Data interpretation

Interpreting experimental results often proves challenging for all models, including Claude 3.5 Sonnet. Although most models perform acceptably when interpreting capacity values (average accuracy of 0.59), comparing Henry constants from metal–organic framework isotherms (average accuracy of 0.83) or distinguishing amorphous from crystalline systems using XRD (average accuracy of 0.69), they struggle to interpret AFM images (average accuracy of 0.24) and often fail at tasks that involve measurements such as width and length, despite the presence of clear legends. They also fail to reliably interpret mass spectrometry and nuclear magnetic resonance spectra (average accuracy of 0.35) or to make inferences from XRD patterns. In the XRD case, it is particularly striking that although some models perform very well at identifying the positions of the most intense reflections, they perform poorly at determining the relative ordering of reflection intensities, which is crucial for interpreting XRD patterns.

Understanding model limitations

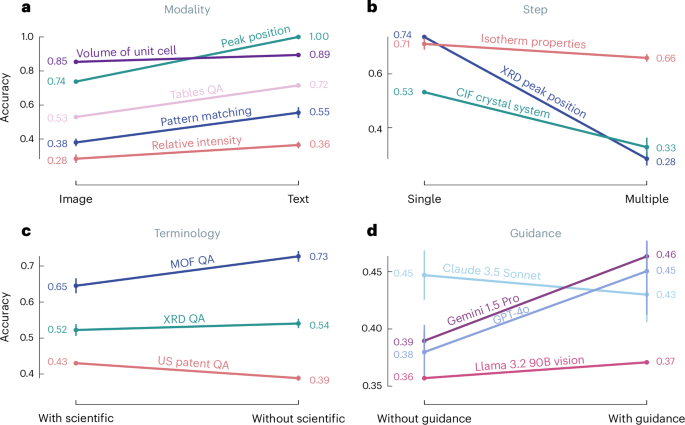

We designed a comprehensive suite of ablation studies to further understand the failure modes of VLLMs. Our approach isolates specific aspects of scientific tasks, from the complexity of the reasoning required to how the information is presented. We probe two distinct categories of limitations (Fig. 4): first, core reasoning limitations that seem fundamental to current model architectures, training approaches or datasets, and second, sensitivities to inference choices.

a, Modality analysis compares performance between image and text-only inputs across different task types, with typically higher performance when the same information is shown in text form. b, Step complexity analysis demonstrates performance degradation as tasks require multiple reasoning steps. c, Terminology impact shows how the specificity of scientific language affects model accuracy, comparing performance with and without domain-specific terminology. We found the behavior on US patent question answering (QA) to be mostly due to the sensitivity of Gemini 1.5 Pro to the prompt template (see Supplementary Section 6). d, The guidance study compares performance across different VLLMs with and without additional task guidance, revealing model-specific sensitivity to prompting strategies. For each task, we calculated the mean score and s.d. across five independent runs; points and error bars represent this mean and s.d., respectively. To summarize performance across models, we averaged the per-task means and s.d. values. For topics (for example, ‘XRD QA’, ‘Isotherm QA’, ‘Tables QA’), we employed a two-step averaging process: we first averaged the scores and s.d. across the tasks for each model, and then averaged these model-specific averages across all models to obtain the final mean score and s.d. for the topic. For the guidance analysis, performance was measured as the mean score across five independent runs, and variability was quantified as the s.d. of those runs. To obtain an overall measure of performance and variability for each side (with and without guidance), we calculated the mean score and the mean s.d. across all tasks within each side.
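The two-step averaging used for topics can be sketched as below. The nested-dictionary layout, model names, task names and scores are hypothetical; only the order of averaging (tasks within each model first, then across models) follows the procedure described in the caption.

```python
import statistics

# scores[model][task] = (mean score over five runs, s.d. over five runs)
Scores = dict[str, dict[str, tuple[float, float]]]


def topic_summary(scores: Scores, topic_tasks: list[str]) -> tuple[float, float]:
    """Two-step average: tasks -> per-model average, then models -> topic average."""
    model_means, model_sds = [], []
    for per_task in scores.values():
        # Step 1: average this model's per-task means and s.d. values.
        model_means.append(statistics.mean(per_task[t][0] for t in topic_tasks))
        model_sds.append(statistics.mean(per_task[t][1] for t in topic_tasks))
    # Step 2: average the model-level summaries across all models.
    return statistics.mean(model_means), statistics.mean(model_sds)


# Hypothetical scores for two models on a two-task topic.
scores = {
    "model_a": {"xrd_1": (0.8, 0.02), "xrd_2": (0.6, 0.04)},
    "model_b": {"xrd_1": (0.4, 0.06), "xrd_2": (0.2, 0.00)},
}
topic_mean, topic_sd = topic_summary(scores, ["xrd_1", "xrd_2"])
```

Note that averaging s.d. values summarizes typical run-to-run variability; it is not the same quantity as a pooled s.d. computed from the underlying variances.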

Core reasoning limitations

Some limitations seem to be intrinsic to current model architectures and are unlikely to be overcome regardless of how tasks are presented or prompted. These fundamental constraints manifest in three key areas.

Spatial reasoning

Although one might expect VLLMs to excel at processing spatial information, our results reveal substantial limitations in this capability. For example, although models achieve high performance in matching hand-drawn molecules to simplified molecular input line-entry system (SMILES) strings (average accuracy of 0.80, four times the baseline), they perform almost indistinguishably from random guessing when naming the isomeric relationship between two compounds (for example, enantiomers or regioisomers; average accuracy of 0.24, only 0.1 above the baseline) and when assigning stereochemistry (average accuracy of 0.24 versus a baseline of 0.22). Similarly, models perform well in simple perception tasks on crystal structures (for instance, counting the number of different species, average accuracy of 0.85) but struggle to assign the crystal system (average accuracy of 0.55) or the space group (average accuracy of 0.45).

These performance drops for tasks requiring spatial reasoning suggest that current VLLMs cannot reliably be used for any tasks requiring this capability—even though this might be one of the most intuitive use cases of these models.

Synthesis across modalities

Given that models consume visual and textual input in seemingly similar ways, one might expect that the same information is processed in the same way regardless of how it is presented to the model.

We presented identical information as text and as images to probe the ability of models to integrate information across modalities. Figure 4 shows that for all tasks in which we present the same information in both forms, performance is better in the text modality than when the information is provided as an image. A striking example emerges when identifying peak positions in XRD: models show a nearly 35% increase in performance when the peak positions are given as text rather than shown visually. Even when calculating the volume of crystal structures, performance differs by four percentage points between visual (unit cell parameters shown in the image) and textual (unit cell parameters given in text) presentations of the structural information. These results suggest that current models have not yet developed robust strategies for cross-modal information synthesis.
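Whether the cell parameters arrive as pixels or as text, the volume task reduces to the same calculation: the standard volume formula for a general (triclinic) unit cell, V = abc·sqrt(1 − cos²α − cos²β − cos²γ + 2 cosα cosβ cosγ). The sketch below shows that calculation; the function name is our own, and that this is the route a model is expected to take is an assumption, not something the benchmark specifies.

```python
import math


def unit_cell_volume(a: float, b: float, c: float,
                     alpha: float, beta: float, gamma: float) -> float:
    """Volume of a general (triclinic) unit cell.

    Lengths in ångström and angles in degrees; the result is in cubic ångström.
    Orthogonal, cubic and hexagonal cells are special cases of this formula.
    """
    ca, cb, cg = (math.cos(math.radians(x)) for x in (alpha, beta, gamma))
    return a * b * c * math.sqrt(1 - ca**2 - cb**2 - cg**2 + 2 * ca * cb * cg)


cubic = unit_cell_volume(4.0, 4.0, 4.0, 90, 90, 90)          # a^3
hexagonal = unit_cell_volume(1.0, 1.0, 1.0, 90, 90, 120)     # a^2 c sin(120°)
```

Because the arithmetic is identical in both presentations, a gap between image and text inputs points to how the parameters are read rather than to how the formula is applied.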

Multi-step reasoning

Motivated by the overall performance analysis, which indicated that models tended to perform best on perception tasks, we designed experiments that probe performance, with the same inputs46, on very similar tasks that differ only in the number of reasoning steps required (or in the number of tool calls when implemented in an agentic framework).

Our analysis reveals consistent degradation in performance as tasks require more reasoning steps. Figure 4 shows that in all of our experiments, models perform substantially worse on tasks that require multiple steps than on those requiring only one. For instance, in XRD pattern analysis, models perform much better at identifying the highest peak than at ranking relative peak intensities (average accuracy of 0.74 for identifying the highest peak versus 0.28 for ranking). Similarly, for the interpretation of adsorption isotherms, accuracy in finding the highest value notably exceeds accuracy in ordering multiple values. This pattern suggests fundamental limitations in chaining logical steps, a crucial capability for scientific reasoning.
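A toy example shows why ranking is more fragile than picking the maximum: the highest peak depends on one comparison being right, whereas a full ranking requires every intensity to be read correctly. The peak list and the misread value below are invented for illustration, and exact-match grading of the ranking is our assumption.

```python
# Hypothetical peak list: (2-theta position in degrees, relative intensity).
Peak = tuple[float, float]


def highest_peak(peaks: list[Peak]) -> float:
    """Single step: the 2-theta position of the most intense reflection."""
    return max(peaks, key=lambda p: p[1])[0]


def peaks_by_intensity(peaks: list[Peak]) -> list[float]:
    """Multiple steps: all positions ordered from strongest to weakest."""
    return [pos for pos, _ in sorted(peaks, key=lambda p: p[1], reverse=True)]


peaks = [(28.4, 100.0), (47.3, 55.0), (56.1, 30.0), (69.1, 8.0)]
# The same pattern with one intensity misread (55 read as 28).
misread = [(28.4, 100.0), (47.3, 28.0), (56.1, 30.0), (69.1, 8.0)]

same_top = highest_peak(peaks) == highest_peak(misread)            # still correct
same_rank = peaks_by_intensity(peaks) == peaks_by_intensity(misread)  # now wrong
```

A single perception error leaves the single-step answer intact but makes an exactly graded ranking incorrect, so errors compound as the number of steps grows.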

Sensitivity to inference choices

Although addressing these core limitations will require novel training approaches, we also identified several factors that substantially influence model performance through inference choices rather than fundamental capabilities. Those factors present an actionable way to improve the performance of current systems directly without retraining them.

Scientific terminology

One might hypothesize that models struggle with some tasks because they are unfamiliar with the scientific terminology used in the questions. Figure 4 shows that removing scientific terminology improves performance on some tasks, including the analysis of adsorption isotherms of metal–organic frameworks and XRD pattern interpretation. Similarly, using International Union of Pure and Applied Chemistry (IUPAC) names instead of SMILES notation for chemical compound identification leads to better results. This suggests that models might be overly sensitive to specific technical vocabularies rather than understanding the underlying concepts. In fact, some models, such as Gemini 1.5 Pro (and its surrounding refusal mechanisms), are very sensitive to the exact wording of the prompt. In Supplementary Section 6, we show that for some questions, large variations in performance can result from apparently minor changes in prompt wording, such as replacing the word ‘image’ with ‘diagram’, ‘plot’, ‘figure’ or ‘photograph’, or even omitting it entirely.
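A wording ablation of this kind can be generated from a single template. The prompt text, the `$noun` placeholder and the variant keys below are hypothetical; only the list of substituted words comes from the text.

```python
from string import Template

# Hypothetical prompt; $noun marks the word whose wording is varied.
PROMPT = Template("Look at the $noun. What is the crystal system of the structure shown?")
NOUNS = ["image", "diagram", "plot", "figure", "photograph"]


def wording_variants() -> dict[str, str]:
    """Return one prompt per noun, plus a variant with the noun omitted."""
    variants = {noun: PROMPT.substitute(noun=noun) for noun in NOUNS}
    # Dropping the noun requires rephrasing, not just an empty substitution.
    variants["(omitted)"] = "What is the crystal system of the structure shown?"
    return variants


variants = wording_variants()
```

Each variant would then be scored over repeated runs in the same way as the other ablations, so that differences can be compared against run-to-run variability.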

Guidance following

Given that chemists receive instructions on interpreting various experimental characterizations, we hypothesized that similar guidance might also help the models perform better on such tasks. Interestingly, adding step-by-step instructions improves performance for most models in spectral analysis, electronic structure interpretation and XRD pattern matching—with the notable exception of Claude 3.5 Sonnet, whose performance does not improve when provided with guidance. This variation in response to instruction suggests different underlying approaches to problem solving across models.
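Following the guidance procedure described in the Fig. 4 caption (mean score and mean s.d. across all tasks on each side), the per-model effect of guidance can be sketched as a difference of side means. The dictionary layout, task names and run scores are hypothetical, and the sample s.d. is our assumption.

```python
import statistics


def side_summary(task_runs: dict[str, list[float]]) -> tuple[float, float]:
    """Mean score and mean s.d. across all tasks on one side (with or without guidance)."""
    means = [statistics.mean(runs) for runs in task_runs.values()]
    sds = [statistics.stdev(runs) for runs in task_runs.values()]
    return statistics.mean(means), statistics.mean(sds)


def guidance_effect(runs: dict[str, dict[str, list[float]]]) -> float:
    """Positive if step-by-step guidance helps this model on average."""
    with_guidance, _ = side_summary(runs["with_guidance"])
    without_guidance, _ = side_summary(runs["without_guidance"])
    return with_guidance - without_guidance


# Hypothetical per-run scores for one model on two tasks (five runs each).
runs = {
    "without_guidance": {"nmr": [0.3] * 5, "xrd": [0.5] * 5},
    "with_guidance": {"nmr": [0.4] * 5, "xrd": [0.6] * 5},
}
effect = guidance_effect(runs)
```

Computing this difference separately for each model makes model-specific responses visible, such as a model whose score does not change when guidance is added.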

Performance as a function of frequency on the Internet

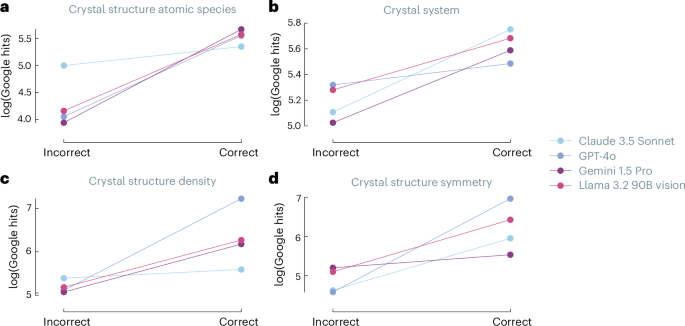

The varying impact of guidance across models led us to investigate whether models truly engage in scientific reasoning or primarily match patterns from their training data46. To probe this question, we measured the number of Google search results for various crystal structures as a proxy for the frequency of those structures in the training corpus (Fig. 5).

a–d, The plots compare four leading VLLMs across different crystallographic tasks: atomic species identification (a), crystal system classification (b), crystal structure density calculations (c) and crystal symmetry determination (d). For each property, log-scale Google hit counts are plotted against the binary correctness (correct/incorrect) of model responses, with lines serving as visual aids only, revealing correlations between answer accuracy and the prevalence of information in online sources. Higher hit counts for correct answers suggest models may not solely rely on reasoning in their responses to crystal structure analysis tasks.

Our analysis reveals a correlation between the prominence of crystal structures on the Internet and task performance. Figure 5 shows that for all tasks in our benchmark, the structures for which the models answer correctly are more prominent on the Internet. This suggests that models might rely more on pattern matching than on genuine scientific reasoning. Interestingly, we observe this effect even for tasks that depend solely on perception, such as counting the number of distinct atomic species.
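Fig. 5 plots log-scale hit counts against binary correctness. One hypothetical way to summarize such data is to compare the mean log10 hit count of correctly and incorrectly answered structures and to compute a point-biserial correlation (a Pearson correlation in which one variable is 0/1). The hit counts below are invented, and the choice of statistic is our assumption.

```python
import math
import statistics


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient (inputs must not be constant)."""
    mx, my = statistics.mean(xs), statistics.mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


def hits_vs_correctness(hits: list[int], correct: list[bool]) -> tuple[float, float, float]:
    """Mean log10 hits for correct and incorrect answers, plus point-biserial r.

    Assumes every hit count is at least 1 so that log10 is defined.
    """
    log_hits = [math.log10(h) for h in hits]
    outcome = [1.0 if c else 0.0 for c in correct]
    mean_correct = statistics.mean(l for l, c in zip(log_hits, correct) if c)
    mean_wrong = statistics.mean(l for l, c in zip(log_hits, correct) if not c)
    return mean_correct, mean_wrong, pearson(log_hits, outcome)


# Hypothetical structures: hit counts and whether the model answered correctly.
hits = [1_000, 10_000, 100_000, 1_000_000]
correct = [False, False, True, True]
mean_correct, mean_wrong, r = hits_vs_correctness(hits, correct)
```

Working with log10 hit counts keeps a few extremely popular structures from dominating the comparison, mirroring the log scale used in the figure.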

Toward robust multimodal assistants

Our analysis reveals both the promise and the limitations of state-of-the-art VLLMs in scientific tasks. Compared with text-only benchmarks such as that of Mirza and colleagues40, we observe much higher performance variability across tasks, suggesting that multimodal systems are more fragile than text-only LLMs. This fragility manifests in several ways: the striking performance gap between visual and textual representations of identical information indicates incomplete integration of modalities, whereas the strong correlation between model performance and the Internet presence of specific crystal structures raises questions about true reasoning capabilities versus pattern matching. The sensitivity to prompting choices (see Supplementary Section 6) and the counterintuitive finding that guidance can degrade performance for top models further underscore reliability concerns.

However, our findings also point to actionable paths forward. Many observed limitations, particularly in spatial reasoning, could be addressed through synthetic training data generation. When pursuing such approaches, we recommend incorporating generalization tests (for example, evaluating spatial reasoning on larger compounds than those in training47) to ensure robust capability development. Furthermore, the substantial performance differences between modalities suggest opportunities for improved training strategies, such as incorporating modality transformation tasks (for example, automated conversion between spectral data representations). These targeted interventions could help bridge the gap between current capabilities and the needs of scientific workflows. Looking forward, it is also important to note that in future workflows built on advanced data management48 or self-driving laboratories49, some of the multimodal integration abilities tested here will matter less, as data will be directly available in a machine-actionable form instead of having to be parsed from images.
